Guardian Watch

AI & Input Systems Prototype (Unreal Engine 4)

Project Type: Exploratory AI & Input Systems Prototype

Engine: Unreal Engine 4

Focus: Voice-driven AI commands · EQS-based stealth behaviour

Status: Prototype / R&D

This prototype was developed as an internal exploration into AI perception systems in Unreal Engine.

Overview

AI interaction systems are often menu-driven and rigid, which limits experimentation and slows how quickly players can express intent. Similarly, stealth AI frequently relies on scripted behaviour rather than perception-based decision making.

Guardian Watch is an exploratory Unreal Engine 4 prototype built to investigate two questions:

Can AI be commanded more naturally through voice input?

Can stealth behaviour emerge dynamically using Unreal’s Environmental Query System (EQS)?

The project focused on rapid technical validation rather than production delivery. Its purpose was to test feasibility, identify engine constraints, and explore modular AI behaviour design in a controlled environment before scaling.

Approach

Exploration & Constraints

We began by exploring Unreal Engine 4’s built-in voice input system to understand its capabilities and limitations. Early testing assessed feasibility and responsiveness and identified integration constraints, particularly given the lack of engine source access and the experimental nature of the feature.

This phase defined the technical boundaries within which the prototype would operate.

System Design

1

Prototype Implementation

Validation & Learnings

2

With constraints established, we designed a modular AI architecture using Behaviour Trees and Unreal’s Environmental Query System (EQS). The goal was to separate input, decision-making, and behaviour, allowing AI logic to remain flexible and extensible as new interactions were tested.

Both guard and intruder NPCs were designed around perception-driven decisions rather than scripted paths.

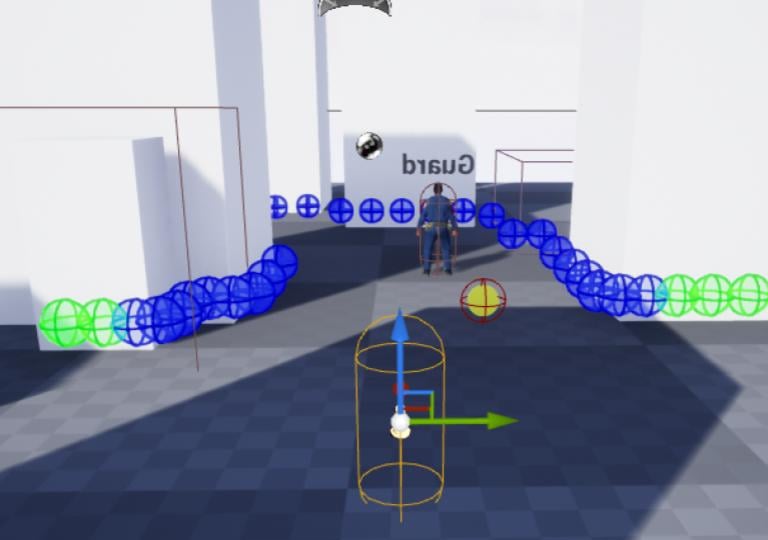

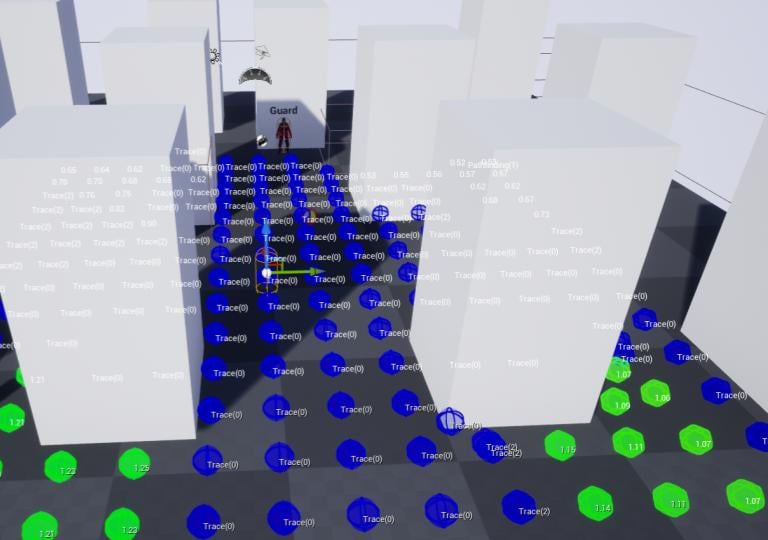

We implemented voice-triggered AI commands and integrated them into the AI decision layer. Guards could respond to player-issued commands, while intruders used EQS scoring to evaluate cover, visibility, and movement options in real time.

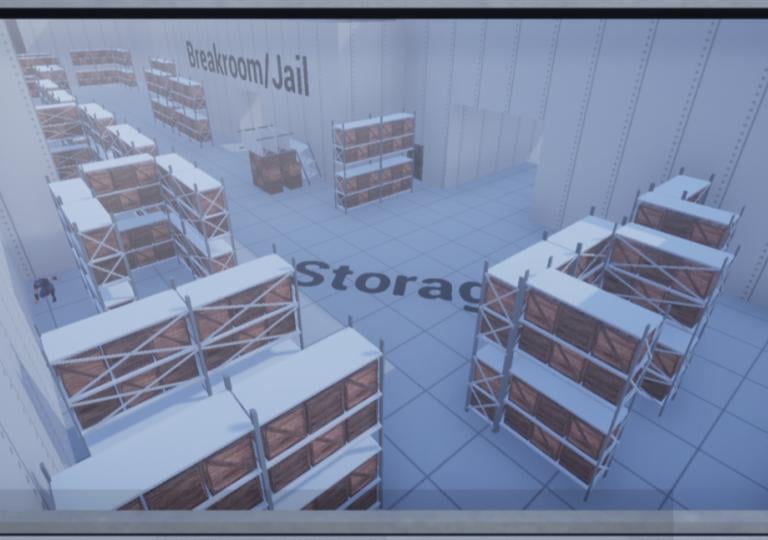

The system was iterated rapidly within a controlled test environment to observe behaviour under varying conditions.

The prototype was validated through repeated gameplay scenarios, focusing on AI responsiveness, behavioural consistency, and emergent outcomes. This phase highlighted which interactions felt viable, which systems scaled well, and where engine-level limitations imposed hard constraints.

Findings from this phase informed both immediate refinements and longer-term recommendations for future implementations.

3

4

AI perception radius debug view

Navigation + detection testing

Level layout used for validation

Outcome

The Guardian Watch prototype successfully validated perception-driven stealth behaviour using Unreal’s Environmental Query System (EQS), demonstrating its effectiveness for dynamic cover selection, avoidance, and movement decisions.
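To make the idea of scoring cover, visibility, and movement options concrete, here is a minimal, engine-agnostic sketch in plain C++. It is not the prototype's EQS setup and does not use Unreal's API. The `Candidate`, `Weights`, `ScoreCandidate`, and `PickBest` names, the weight values, and the scoring formula are all assumptions chosen for illustration.

```cpp
#include <algorithm>
#include <cmath>
#include <vector>

// Hypothetical candidate point an intruder could move to.
struct Candidate {
    float x, y;            // position on the navigation plane
    bool  hasCover;        // true if geometry blocks line of sight to the guard
    float guardVisibility; // 0 = fully hidden, 1 = fully exposed
};

// Illustrative weights; real values would be tuned per test scenario.
struct Weights {
    float cover      = 1.0f;
    float visibility = 1.0f;
    float distance   = 0.5f;
};

// Higher is better: reward cover, penalise exposure and long moves.
// maxDistance must be greater than zero.
float ScoreCandidate(const Candidate& c, float fromX, float fromY,
                     float maxDistance, const Weights& w) {
    const float dist = std::hypot(c.x - fromX, c.y - fromY);
    const float normDist = std::min(dist / maxDistance, 1.0f);
    return w.cover * (c.hasCover ? 1.0f : 0.0f)
         - w.visibility * c.guardVisibility
         - w.distance * normDist;
}

// Returns the index of the highest-scoring candidate, or -1 if there are none.
int PickBest(const std::vector<Candidate>& candidates, float fromX, float fromY,
             float maxDistance, const Weights& w = Weights{}) {
    int best = -1;
    float bestScore = 0.0f;
    for (int i = 0; i < static_cast<int>(candidates.size()); ++i) {
        const float s = ScoreCandidate(candidates[i], fromX, fromY, maxDistance, w);
        if (best < 0 || s > bestScore) {
            best = i;
            bestScore = s;
        }
    }
    return best;
}
```

In this sketch a nearby, well-hidden point in cover beats both an exposed point next to the agent and a covered point at the edge of the search radius, which is the kind of trade-off a weighted set of EQS tests is meant to express.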

Additional outcomes included:

Confirmation that voice-triggered commands could be integrated into AI decision-making workflows, within the limits of UE4’s experimental input support.

Validation of a modular AI architecture that enabled rapid iteration and behavioural experimentation.

Clear identification of engine-level constraints impacting scalability and long-term production readiness.
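As an illustration of how a recognised phrase might be handed to the AI decision layer, the following plain C++ sketch maps text to a guard command. It assumes the recognition step has already produced a string. The `GuardCommand` values, the phrase table, and the function names are hypothetical and are not taken from the prototype or from UE4's voice input API.

```cpp
#include <cctype>
#include <string>
#include <unordered_map>

// Hypothetical command set for a guard NPC.
enum class GuardCommand { Patrol, Investigate, HoldPosition, Follow };

// Lower-case and trim so that "  Hold Position " matches "hold position".
std::string NormalisePhrase(const std::string& raw) {
    std::string out;
    for (unsigned char ch : raw) {
        out.push_back(static_cast<char>(std::tolower(ch)));
    }
    const std::string::size_type first = out.find_first_not_of(" \t");
    if (first == std::string::npos) {
        return "";
    }
    const std::string::size_type last = out.find_last_not_of(" \t");
    return out.substr(first, last - first + 1);
}

// Writes the matching command to `out` and returns true.
// Returns false and leaves `out` untouched if the phrase is unknown.
bool TryParseCommand(const std::string& recognisedText, GuardCommand& out) {
    static const std::unordered_map<std::string, GuardCommand> kPhrases = {
        {"patrol",        GuardCommand::Patrol},
        {"investigate",   GuardCommand::Investigate},
        {"hold position", GuardCommand::HoldPosition},
        {"follow me",     GuardCommand::Follow},
    };
    const auto it = kPhrases.find(NormalisePhrase(recognisedText));
    if (it == kPhrases.end()) {
        return false;
    }
    out = it->second;
    return true;
}
```

Keeping the phrase table separate from the recognition backend means the same mapping could sit behind a different speech system later, which fits the constraint noted above about experimental input support.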

Rather than targeting polish or performance metrics, the project prioritised learning and system validation, producing actionable insight into which approaches were viable and which required alternative solutions.

Evaluation & Recommendations

The Guardian Watch prototype provided valuable insight into the strengths and limitations of experimental AI systems within Unreal Engine. While the core architecture proved effective for rapid iteration and behavioural testing, certain engine-level constraints limited scalability and long-term viability.

Based on the findings from this prototype, future implementations would benefit from:

Replacing deprecated or experimental input systems with modern, extensible speech-to-intent solutions.

Expanding EQS scoring to incorporate additional sensory inputs, such as audio cues and dynamic environmental factors.

Migrating the system to UE5-native AI workflows to improve flexibility, tooling support, and long-term maintainability.

Preserving the modular separation between input, decision-making, and behaviour to support ongoing experimentation.
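The separation between input, decision-making, and behaviour mentioned in the last recommendation can be sketched in plain C++ as three small pieces with narrow interfaces. This is an assumption-laden illustration, not the prototype's Behaviour Tree setup: `IntentSource`, `ScriptedIntentSource`, `Perception`, `Decide`, and `Tick` are hypothetical names, and the rule that perception overrides player commands is a design choice made only for this example.

```cpp
#include <cstddef>
#include <utility>
#include <vector>

// Input layer: anything that can yield a player intent.
enum class Intent { None, Investigate, Hold };

class IntentSource {
public:
    virtual ~IntentSource() = default;
    virtual Intent Poll() = 0;
};

// Scripted stand-in for a voice or speech-to-intent backend (hypothetical).
class ScriptedIntentSource : public IntentSource {
public:
    explicit ScriptedIntentSource(std::vector<Intent> queue)
        : queue_(std::move(queue)) {}

    Intent Poll() override {
        if (next_ >= queue_.size()) {
            return Intent::None;
        }
        return queue_[next_++];
    }

private:
    std::vector<Intent> queue_;
    std::size_t next_ = 0;
};

// Decision layer: combines the latest intent with what the guard perceives.
enum class Behaviour { Patrol, MoveToNoise, StandGuard, ChaseIntruder };

struct Perception {
    bool intruderVisible = false;
};

Behaviour Decide(Intent intent, const Perception& p, Behaviour current) {
    if (p.intruderVisible) {
        return Behaviour::ChaseIntruder; // assumed rule: perception wins
    }
    switch (intent) {
        case Intent::Investigate: return Behaviour::MoveToNoise;
        case Intent::Hold:        return Behaviour::StandGuard;
        case Intent::None:        break;
    }
    return current; // no new command: keep doing the current behaviour
}

// One update step: poll input, decide, and return the behaviour to run.
Behaviour Tick(IntentSource& source, const Perception& p, Behaviour current) {
    return Decide(source.Poll(), p, current);
}
```

Because `Tick` only depends on the `IntentSource` interface, swapping the scripted source for a different input backend would not require changes to the decision or behaviour code.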

These recommendations reflect a focus on reducing technical risk early, validating systems before scaling, and making informed architectural decisions aligned with production realities.